
11/6/2023 Robots txt example

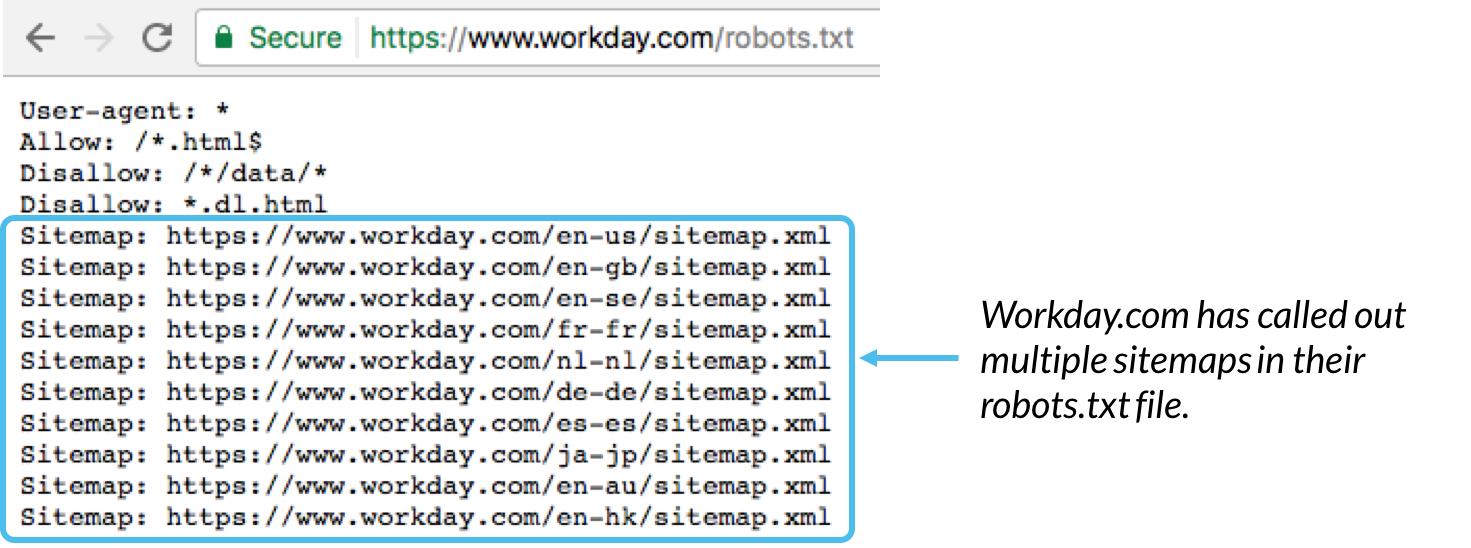

Understanding how Google crawls websites will help you see the value in using the robots.txt protocol. Google dedicates a limited amount of time to crawling any particular site, known as the crawl budget. Google calculates this budget based upon a crawl rate limit and crawl demand. If Google sees that its crawling slows a site down, and thus hurts the experience of organic visitors, it will slow the rate of its crawls.

In a robots.txt file, the first line says "user-agent." This portion of the protocol dictates who the instructions apply to, and an asterisk "*", usually referred to as a wildcard, means that the command applies to all web robots. Under the "user-agent" line it will say "disallow." This tells the robots what they cannot do. If there is a "/", it means that the spiders should not crawl anything on the site. If this portion remains blank, then the spiders can crawl the entire site.
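The user-agent and disallow rules described above can be checked with a short script. This is a minimal sketch using Python's standard `urllib.robotparser` module. The `example.com` URLs, the `/private/` path, and the `build_parser` helper are illustrative assumptions, not part of any real site.

```python
from urllib.robotparser import RobotFileParser


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text held in a string (hypothetical helper)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


# A wildcard user-agent with "Disallow: /" blocks the whole site.
BLOCK_ALL = """\
User-agent: *
Disallow: /
"""

# A blank disallow value leaves the whole site open to crawling.
ALLOW_ALL = """\
User-agent: *
Disallow:
"""

# A path after "Disallow:" blocks only that section (assumed path).
BLOCK_SECTION = """\
User-agent: *
Disallow: /private/
"""

blocked = build_parser(BLOCK_ALL)
open_site = build_parser(ALLOW_ALL)
partial = build_parser(BLOCK_SECTION)

# can_fetch(user_agent, url) reports whether that robot may crawl the URL.
print(blocked.can_fetch("*", "https://example.com/page"))
print(open_site.can_fetch("*", "https://example.com/page"))
print(partial.can_fetch("Googlebot", "https://example.com/private/a.html"))
print(partial.can_fetch("Googlebot", "https://example.com/public.html"))
```

Under these assumptions the first and third checks should report that crawling is not allowed, and the second and fourth that it is. Because the rules use the wildcard, they apply to any robot name passed to `can_fetch`.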

When a search engine lands on a site, it looks at the robots.txt file for instructions. It can seem counterintuitive for a site to want to instruct a search engine not to crawl its pages, but doing so can give webmasters powerful control over their crawl budget. When writing out your protocol file, you will use simple, two-line commands.
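As a rough illustration of those two-line commands, the sketch below renders a protocol file from pairs of values. The `render_robots_txt` helper and the `/private/` path are hypothetical; `Googlebot` is the user-agent name used by Google's crawler.

```python
def render_robots_txt(rules):
    """Render (user_agent, disallowed_path) pairs as two-line commands.

    Hypothetical helper: an empty path yields a blank "Disallow:" line,
    which leaves the whole site open to that robot.
    """
    blocks = []
    for agent, path in rules:
        first_line = f"User-agent: {agent}"
        second_line = f"Disallow: {path}".rstrip()
        blocks.append(first_line + "\n" + second_line)
    # Separate each two-line command with a blank line.
    return "\n\n".join(blocks) + "\n"


example = render_robots_txt([
    ("*", "/private/"),   # all robots: stay out of /private/ (assumed path)
    ("Googlebot", ""),    # Googlebot: nothing disallowed
])
print(example)
```

The resulting text would be saved as `robots.txt` at the root of the site, where crawlers look for it.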

Robots.txt is a series of commands that tells web robots, usually search engines, which pages to crawl and which not to crawl. The origins of the robots.txt protocol, or the "robots exclusion protocol," can be traced back to the mid-1990s, during the early days of web spiders traveling the internet to read websites. Some webmasters became concerned about which spiders were visiting their sites. A file containing directions on which site sections should be crawled and which shouldn't offered site owners the promise of more control over which crawlers could visit their URLs and how much capacity they were allowed to consume. Since then, robots.txt has grown to meet the needs of modern web designers and website owners. The current versions of the protocol are accepted by the spiders that the major search engines send out to gather information for their respective ranking algorithms. This is a common agreement among the different search engines, making the commands a potentially valuable, but often overlooked, tool for brands in their SEO efforts.
